How do these social implications influence our design choices? UX designers are tasked with optimizing the user experience. I’d be interested to see additional research on how AI abuse affects our communications with others, especially considering the prevalence of female AI.

While results vary across the research, some studies have concluded that long-term exposure to sex-typed video games is correlated with greater tolerance of sexual harassment and greater rape myth acceptance. In a similar scenario, studies have examined the influence of violence and sexual objectification of women in video games on rape myth acceptance, or the internalization of attitudes that justify or excuse rape. Brahnam has stated that “If we’re practicing abusing agents of different types, then I think it lends itself to real world abuse.” It is troubling that the harassment of female chatbots may contribute to the already widespread issue of sexual harassment towards women. Brahnam’s research found that users direct more sexual and profane comments towards female-presenting chatbots than male-presenting chatbots. In my opinion, though, these female virtual assistants perpetuate the stereotype that women are subservient. Research shows that across cultures, we associate qualities such as kindness, helpfulness, warmth, and communicativeness with women, which may be why people prefer female voices for their chatbots. While some identify as gender-neutral, the default voice is typically that of a woman. With the emergence of AI virtual assistants, like Siri, Alexa, and Google Home, the majority of the personas are female. If our behavior towards AI may be mimicked by our children and influences our treatment of each other, there could be significant implications for gender dynamics. This might be most dangerous to our children, who learn by imitating those around them. While we might not be able to offend Siri or Alexa, we can definitely offend those who overhear us. Therefore, practicing this behavior towards virtual assistants could bleed into our interactions with other beings.
However, studies have shown that venting does not reduce emotion, but rehearses it. People who are encouraged to express their anger are actually more aggressive in their interactions with others. We may argue that abusing AI is a way of letting off steam on an inanimate object, rather than an actual person. An article in the Harvard Business Review suggests that yelling at technology represents poor leadership. Since AIs lack emotional capability, does that mean these actions are harmless? Though abusing a virtual assistant may seem innocuous, this behavior reflects back on our character. One purpose of verbal abuse is to cause harm. Justine Cassell, Professor of Human-Computer Interaction at Carnegie Mellon, states that “The more human-like a system acts, the broader the expectations that people may have for it.” As virtual assistants do not visually indicate their functionality, we may assume they have more abilities than they actually do, or probe them to better understand their limitations. Artificial intelligence may be crossing into the realm of the uncanny valley, a phenomenon where a design that is similar, but not identical, to a human being provokes a very negative response to the simulated likeness. When speaking to a bot at a call center, raising our voices often gets us to a human representative more quickly, as it can be frustrating to deal with a computer.

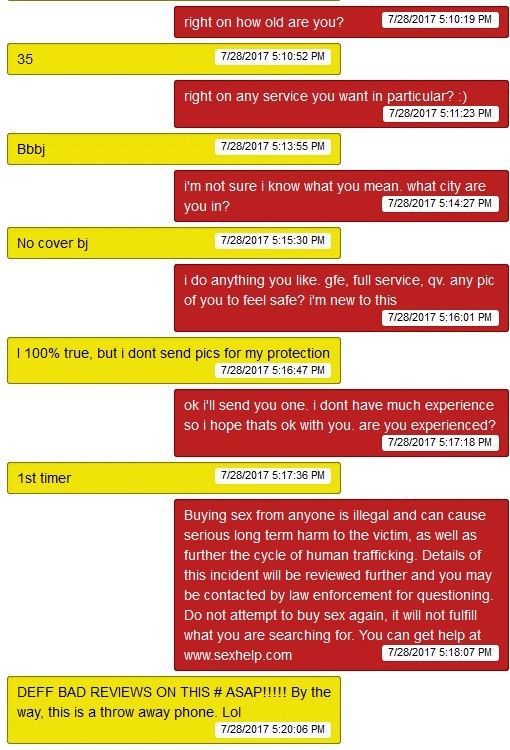

To address this problem, we first need to understand why people are mean to virtual assistants. While UX designers strive to create the ideal experience, what does it say about our society if cruelty is tolerated and widespread? What is our ethical responsibility as designers: to accommodate this behavior or not? Many in the UX field are now considering hedonomics, or the “branch of science and design devoted to the promotion of pleasurable human-technology interaction”. According to Sheryl Brahnam, Assistant Professor in Computer Information Systems at Missouri State University, 10%-50% of our interactions with conversational agents (CAs) are abusive. When faced with unfamiliar artificial intelligence (AI) in the form of chatbots, from AIM’s SmarterChild to Apple’s Siri, humans try to push the boundaries of its capability. We probably all have our own stories of asking inappropriate questions or saying mean things to chatbots. Sometimes, these attacks were unprovoked, with people sending insults before ever asking a technical question. While fielding questions from customers through online help, my coworkers and I would be cursed out by people who mistook us for chatbots or virtual assistants.
